How the robot reads signs using OpenCV

First, the robot must locate a sign and move toward it. It does this by following the blue color around the signs. I'm having issues with color tracking because the AWB (auto white balance) keeps changing the image lighting, and at the moment I can't turn it off. The problem has already been reported on the raspberrypi.org forum, and they are working on a solution.

When it is close to the sign, it performs the following steps to read it:

1. Find image contours

Apply GaussianBlur to get a smooth image and then use Canny to detect edges. This edge image is then passed to the OpenCV method findContours to find the contours. The Canny output is shown in image 1 below.

2. Find approximate rectangular contours

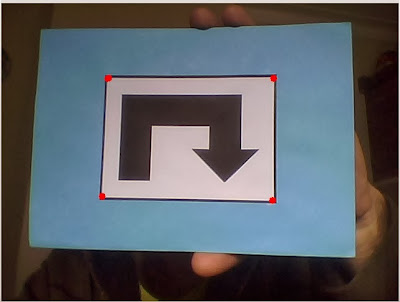

All the signs have a black rectangle around them, so the next step is to find rectangular contours. This is done with the OpenCV approxPolyDP method. The resulting polygons are then filtered by number of corners (exactly 4) and by a minimum area.

3. Isolate sign and correct perspective

Because the robot moves, it will not find perfectly aligned signs. A perspective correction is necessary before trying to match the sign against the previously loaded reference images. This is done with the warpPerspective method. Details on how to use it here:

The next image shows the result of the process; the corrected image is shown in "B".

4. Binarize and match

After isolating and perspective-correcting the region of interest, the resulting image is binarized and compared against all the reference images to look for a match. At the moment the system has 8 reference images to compare against.

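The comparison is performed using a binary XOR function (bitwise_xor). The next images show the comparison result for a match and a non-match. Because XOR leaves a pixel non-zero only where the two images differ, counting the non-zero pixels with the countNonZero method reveals the matching sign: it is the reference with the fewest differences.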

Match image

Non-match image

Result

This method works well and is fast enough to run on the Raspberry Pi. I've tried well-known methods like SURF and SIFT, but they are too slow for a real-time application on the Raspberry Pi.

Your robot is one of my favorite Pi projects. Please keep the updates coming (as and when you get time). Lots of unusual things like the inverted pendulum balancing and the opencv processing.

Would make an excellent kit if the cost could be kept sensible.

Also we would like to see it in the MagPi, superb project.

Hello Guy,

I appreciate your work, and I hope you can share some code.

Thank you

Your work is very interesting. Thank you

Can you send the code for the Raspberry Pi please? Thanks!

ChelikanovAV@mail.ru

I am very interested in your project... can you email me the code? I hope you can help me with my college project...

fieyha5235@gmail.com

Your project is very cool and interesting. I want to build this robot as my final year project at my university, and I would like your help. Please send me the code... I will be very grateful to you!

daniyalahmad477@gmail.com

Your project is very interesting. I hope you can share the code. rudresh.narwal20@gmail.com

Could you please send me the code for my project at shibmalyada@gmail.com? It would be very helpful. Thank you.

Hey, could you please, if you want, send some of the code for this project to:

bestdailyanime@gmail.com

have a nice day:)

Can you send the code for raspberry pi please. Thanks!

med_07.jaloul@hotmail.fr

Hello,

Could you please send me the code for the Raspberry Pi?

Thanks

neo.aiko@free.Fr